Pattern: Hybrid Tenancy

Written on

Mixing single and multi-tenancy models

Where multi-tenant systems host multiple customers on the same service instances, and single tenancy ensures that each customer gets their own instance, hybrid tenancy blends these approaches inside a single system, aiming to get the best of both worlds.

Let us consider a domain which is likely to involve some fairly sensitive data: the medical industry. Our system handles enrolling new patients at the clinic, scheduling appointments, and storing lab results. We are so concerned about the sensitivity of the lab data that each patient's records are stored in a separate instance of the Labs Service. Patient enrollment, however, is handled by a single service, as we only capture non-medical data here, and likewise we consider it acceptable to have a single appointments service.

One of the problems here is that while our data may be isolated inside the service, once it is exposed over an API boundary we may still have concerns about how readily multiple customers' data can be accessed at once, or the chance that one customer can see another customer's data. If only small parts of the data are exposed at a time via an API, these concerns may be adequately addressed, but this needs to be taken into account when designing the API itself.

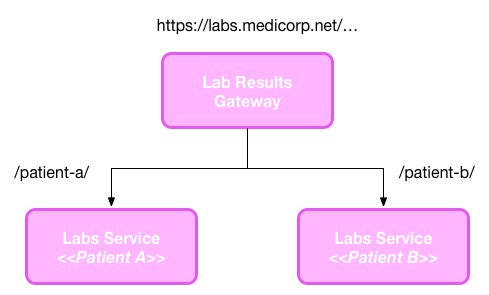

When mixing these tenancy models, at the intersection between the single-tenancy and multi-tenancy parts of our system, some form of routing is required to ensure that requests for a given customer get to the right place. It is much cleaner if this can be done transparently to the client. This could be done using something like a web proxy, as seen in this extremely simplistic example:
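A minimal sketch of that routing decision in Python, assuming the customer key is the path segment after `/labs/`. The host names, the customer IDs, and the `resolve_upstream` function are all hypothetical; a real proxy would also forward the request rather than just compute the target.

```python
from urllib.parse import urlsplit

# Hypothetical routing table: customer key -> that customer's own Labs Service instance.
LABS_INSTANCES = {
    "customer-a": "http://labs-customer-a.internal:8080",
    "customer-b": "http://labs-customer-b.internal:8080",
}

# Shared (multi-tenant) services have exactly one upstream each.
SHARED_SERVICES = {
    "enrollment": "http://enrollment.internal:8080",
    "appointments": "http://appointments.internal:8080",
}


def resolve_upstream(request_uri: str) -> str:
    """Map a URI such as /labs/<customer>/results/42 to an upstream URL.

    The query string is ignored to keep the sketch short.
    """
    segments = [s for s in urlsplit(request_uri).path.split("/") if s]
    if not segments:
        raise LookupError("no service in path")
    service, rest = segments[0], segments[1:]

    if service in SHARED_SERVICES:
        # Multi-tenant part: every customer goes to the same instance.
        return "/".join([SHARED_SERVICES[service], *rest])

    if service == "labs":
        # Single-tenant part: the first segment after /labs/ picks the instance.
        if not rest:
            raise LookupError("no customer in path")
        customer, remainder = rest[0], rest[1:]
        base = LABS_INSTANCES.get(customer)
        if base is None:
            raise LookupError(f"unknown customer: {customer}")
        return "/".join([base, *remainder])

    raise LookupError(f"unknown service: {service}")
```

Because the client only ever talks to the gateway, the table can change (instances moved, added, or retired) without the client noticing.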

The above example assumes that the key used to determine which instance of the service to access is stored in the requested URI, but we may instead make use of headers or authentication tokens, in which case we may be asking our gateway to do more work for us.
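To give a feel for that extra work, here is a hedged sketch in which the gateway reads the customer key from a bearer token and verifies it before routing. The token format (`<customer>.<hex HMAC>`), the `SECRET` value, and all function names are assumptions made for illustration; a production system would more likely use an established token standard.

```python
import hashlib
import hmac

# Assumption: a secret shared between whoever issues tokens and the gateway.
SECRET = b"demo-secret"


def sign(customer: str) -> str:
    """Issue a toy token of the form '<customer>.<hex HMAC-SHA256>'."""
    mac = hmac.new(SECRET, customer.encode(), hashlib.sha256).hexdigest()
    return f"{customer}.{mac}"


def customer_from_token(token: str) -> str:
    """Return the customer key, but only if the signature checks out."""
    customer, _, mac = token.rpartition(".")
    expected = hmac.new(SECRET, customer.encode(), hashlib.sha256).hexdigest()
    # Compare as bytes in constant time to avoid leaking timing information.
    if not customer or not hmac.compare_digest(mac.encode(), expected.encode()):
        raise PermissionError("invalid token")
    return customer


def customer_from_headers(headers: dict[str, str]) -> str:
    """Extract and verify the customer key from an Authorization header."""
    lowered = {name.lower(): value for name, value in headers.items()}
    auth = lowered.get("authorization", "")
    if not auth.startswith("Bearer "):
        raise PermissionError("missing bearer token")
    return customer_from_token(auth[len("Bearer "):])
```

The verified key can then be used to look up the right instance, which means the customer cannot pick someone else's instance simply by editing a URL.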

As with single tenancy, the cost of keeping separate instances of a service running per customer could be addressed if we could launch per-customer instances on demand. The gateway shown above is an obvious place where the logic could live for starting (and stopping) instances.
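The start-and-stop logic could be sketched roughly as follows. The `OnDemandInstances` class, its `start` and `stop` callbacks, and the idle timeout are hypothetical; how an instance is actually launched (container, VM, process) is left to the callbacks, and the sketch is not thread-safe.

```python
import time
from typing import Callable


class OnDemandInstances:
    """Start a customer's instance on first use and stop it after an idle period."""

    def __init__(
        self,
        start: Callable[[str], str],   # launches an instance, returns its address
        stop: Callable[[str], None],   # shuts the customer's instance down
        idle_seconds: float = 300.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._start = start
        self._stop = stop
        self._idle_seconds = idle_seconds
        self._clock = clock
        # customer key -> (instance address, time of last request)
        self._running: dict[str, tuple[str, float]] = {}

    def address_for(self, customer: str) -> str:
        """Return the instance address, launching the instance if needed."""
        entry = self._running.get(customer)
        address = entry[0] if entry else self._start(customer)
        self._running[customer] = (address, self._clock())
        return address

    def reap_idle(self) -> list[str]:
        """Stop instances unused for at least idle_seconds; return their keys."""
        now = self._clock()
        idle = [
            customer
            for customer, (_, last_used) in self._running.items()
            if now - last_used >= self._idle_seconds
        ]
        for customer in idle:
            self._stop(customer)
            del self._running[customer]
        return idle
```

The gateway would call `address_for` on every request and run `reap_idle` periodically. The trade-off is that the first request after an idle period pays the instance's start-up time.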